The Neural Basis of

Predicate-Argument Structure

James R Hurford,

Language Evolution and Computation Research

Unit,

Linguistics Department, University of Edinburgh

(Note: In Behavioral and Brain Sciences 23(6), 2003.

Where this online version differs from the print version, the

print version is the ``authoritative'' version.) Here are

peer commentaries on this target article. And here is my response to

those commentaries.

Keywords: logic, predicate,

argument, neural, object, dorsal, ventral, attention, deictic, reference.

Abstract

Neural correlates exist for a basic component of logical formulae, PREDICATE(x).Vision and audition research in primates and humans shows two independent neural pathways; one locates objects in body-centered space, the other attributes properties, such as colour, to objects. In vision these are the dorsal and ventral pathways. In audition, similarly separable `where' and `what' pathways exist. PREDICATE(x) is a schematic representation of the brain's integration of the two processes of delivery by the senses of the location of an arbitrary referent object, mapped in parietal cortex, and analysis of the properties of the referent by perceptual subsystems.

The brain computes actions using a few `deictic' variables pointing to objects. Parallels exist between such non-linguistic variables and linguistic deictic devices. Indexicality and reference have linguistic and non-linguistic (e.g. visual) versions, sharing the concept of attention. The individual variables of logical formulae are interpreted as corresponding to these mental variables. In computing action, the deictic variables are linked with `semantic' information about the objects, corresponding to logical predicates.

Mental scene-descriptions are necessary for practical tasks of primates, and pre-exist language phylogenetically. The type of scene-descriptions used by non-human primates would be reused for more complex cognitive, ultimately linguistic, purposes. The provision by the brain's sensory/perceptual systems of about four variables for temporary assignment to objects, and the separate processes of perceptual categorization of the objects so identified, constitute a preadaptive platform on which an early system for the linguistic description of scenes developed.

1 Introduction

This article argues for the following thesis:

- Thesis:

- Neural evidence exists for predicate-argument structure as the core of phylogenetically and ontogenetically primitive (prelinguistic) mental representations. The structures of modern natural languages can be mapped onto these primitive representations.

The idea that language is built onto pre-existing representations is common enough, being found in various forms in works such as Bickerton (1998), Kirby (2000), Kirby (1999), Hurford (2000b), Bennett (1976). Conjunctions of elementary propositions of the form PREDICATE(x) have been used by Batali as representations of conceptual structure pre-existing language in his impressive computer simulations of the emergence of syntactic structure in a population of interacting agents (Batali, 2002). Justifying such pre-existing representations in terms of neural structure and processes is relatively new.

This paper starts from a very simple component of the Fregean logical scheme, PREDICATE(x), and proposes a neural interpretation for it. This is, to my knowledge, the first proposal of a `wormhole' between the hitherto mutually isolated universes of formal logic and empirical neuroscience. The fact that it is possible to show a correlation between neural processes and logicians' conclusions about logical form is a step in the unification of science. The discoveries in neuroscience confirm that the logicians have been on the right track, that the two disciplines have something to say to each other despite their radically different methods, and that further unification may be sought. The brain having a complexity far in excess of any representation scheme dreamt up by a logician, it is to be expected that the basic PREDICATE(x) formalism is to some extent an idealization of what actually happens in the brain. But, conceding that the neural facts are messier than could be captured with absolute fidelity by any formula as simple as PREDICATE(x), I hope to show that the central ideas embodied in the logical formula map satisfyingly neatly onto certain specific neural processes.

The claim that some feature of language structure maps onto a feature of primitive mental representations needs (i) a plausible bridge between such representation and the structure of language, and (ii) a characterization of `primitive mental representation' independent of language itself, to avoid circularity. The means of satisfying the first, `bridge to language' condition will be discussed in the next subsection. Fulfilling the second condition, the bridge to brain structure and processing, establishing the language-independent validity of PREDICATE(x) as representing fundamental mental processes in both humans and non-human primates, will occupy the meat of this article (Sections 2 and 3). The article is original only in bringing together the fruits of others' labours. Neuroscientists and psychologists will be familiar with much of the empirical research cited here, but I hope they will be interested in my claims for its wider significance. Linguists, philosophers and logicians might be excited to discover a new light cast on their subject by recent neurological research.

1.1 The bridge from logic to language

The relationship between language and thought is, of course, a vast topic, and there is only space here to sketch my premises about this relationship.Descriptions of the structure of languages are couched in symbolic terms. Although it is certain that a human's knowledge of his/her language is implemented in neurons, and at an even more basic level of analysis, in atoms, symbolic representations are clearly well suited for the study of language structure. Neuroscientists don't need logical formulae to represent the structures and processes that they find. Ordinary language, supplemented by diagrams, mathematical formulae, and neologized technical nouns, verbs and adjectives, is adequate for the expression of neuroscientists' amazingly impressive discoveries. Where exotic technical notations are invented, it is for compactness and convenience, and their empirical content can always be translated into more cumbersome ordinary language (with the technical nouns, adjectives, etc.).

Logical notations, on the other hand, were developed by scholars theorizing

in the neurological dark about the structure of language and thought. Languages

are systems for the expression of thought. The sounds and written characters,

and even the syntax and phonology, of languages can also be described in

concrete ordinary language, augmented with diagrams and technical vocabulary.

Here too, invented exotic notations are for compactness and convenience; which

syntax lecturer has not paraphrased S  NP VP into ordinary English for the

benefit of a first-year class? But the other end of the language problem, the

domain of thoughts or meanings, has remained elusive to non-tautological

ordinary language description. Of course, it is possible to use ordinary

language to express thoughts --- we do it all the time. But to say that `Snow is

white' describes the thought expressed by `Snow is white' is either simply wrong

(because description of a thought process and

expression

of a thought are not equivalent) or at best

uninformative. To arrive at an informative characterization of the relation

between thought and language (assuming the relation to be other than identity),

you need some characterization of thought which does not merely mirror language.

So logicians have developed special notations for describing thought (not that

they have always admitted or been aware that that is what they were doing). But,

up to the present, the only route that one could trace from the logical

notations to any empirically given facts was back through the ordinary

language expressions which motivated them in the first place. A

neuroscientist can show you (using suitable instruments which

you implicitly trust) the synapses, spikes and neural pathways that he

investigates. But the logician cannot illuminatingly bring to your attention the

logical form of a particular natural sentence, without using the sentence

itself, or a paraphrase of it, as an instrument in his demonstration. The mental

adjustment that a beginning student of logic is forced to make, in training

herself to have the `logician's mindset', is absolutely different in kind from

the mental adjustment that a beginning student of a typical empirical science

has to make. One might, prematurely, conclude that Logic and the empirical

sciences occupy different universes, and that no wormhole connects them.

NP VP into ordinary English for the

benefit of a first-year class? But the other end of the language problem, the

domain of thoughts or meanings, has remained elusive to non-tautological

ordinary language description. Of course, it is possible to use ordinary

language to express thoughts --- we do it all the time. But to say that `Snow is

white' describes the thought expressed by `Snow is white' is either simply wrong

(because description of a thought process and

expression

of a thought are not equivalent) or at best

uninformative. To arrive at an informative characterization of the relation

between thought and language (assuming the relation to be other than identity),

you need some characterization of thought which does not merely mirror language.

So logicians have developed special notations for describing thought (not that

they have always admitted or been aware that that is what they were doing). But,

up to the present, the only route that one could trace from the logical

notations to any empirically given facts was back through the ordinary

language expressions which motivated them in the first place. A

neuroscientist can show you (using suitable instruments which

you implicitly trust) the synapses, spikes and neural pathways that he

investigates. But the logician cannot illuminatingly bring to your attention the

logical form of a particular natural sentence, without using the sentence

itself, or a paraphrase of it, as an instrument in his demonstration. The mental

adjustment that a beginning student of logic is forced to make, in training

herself to have the `logician's mindset', is absolutely different in kind from

the mental adjustment that a beginning student of a typical empirical science

has to make. One might, prematurely, conclude that Logic and the empirical

sciences occupy different universes, and that no wormhole connects them.

Despite its apparently unempirical character, logical formalism is not mere arbitrary stipulation, as some physical scientists may be tempted to believe. One logical notation can be more explanatorily powerful than another, as Frege's advances show. Frege's introduction of quantifiers binding individual variables which could be used in argument places was a great leap forward from the straightjacket of subject-predicate structure originally proposed by Aristotle and not revised for over two millennia. Frege's new notation (but not its strictly graphological form which was awfully cumbersome) allowed one to explain thoughts and inferences involving a far greater range of natural sentences. Logical representations, systematically mapped to the corresponding sentences of natural languages, clarify enormously the system underlying much human reasoning, which, without the translation to logical notation, would appear utterly chaotic and baffling.

It is necessary to note a common divergence of usage, between philosophers and linguists, in the term `subject'. For some philosophers (e.g. Strawson, 1974, 1959), a predicate in a simple proposition, as expressed by John loves Mary, for example, can have more than one `subject'; in the example given, the predicate corresponds to loves and its `subjects' to John and Mary. On this usage, the term `subject' is equivalent to `argument'. Linguists, on the other hand, distinguish between grammatical subjects and grammatical objects, and further between direct and indirect objects. Thus in Russia sold Alaska to America, the last two nouns are not subjects, but direct and indirect object respectively. The traditional grammatical division of a sentence into Subject+Predicate is especially problematic where the `Predicate' contains several NPs, semantically interpreted as arguments of the predicate expressed by the verb. Which argument of a predicate, if any, is privileged to be expressed as the grammatical subject of a sentence (thus in English typically occurring before the verb, and determining number and person agreement in the verb) is not relevant to the truth-conditional analysis of the sentence. Thus a variety of sentences such as Alaska was sold to America by Russia and It was America that was sold Alaska by Russia all describe the same state of affairs as the earlier example. The difference between the sentences is a matter of rhetoric, or appropriate presentation of information in various contextual circumstances, involving what may have been salient in the mind of the hearer or reader before encountering the sentence, or how the speaker or writer wishes to direct the subsequent discourse.

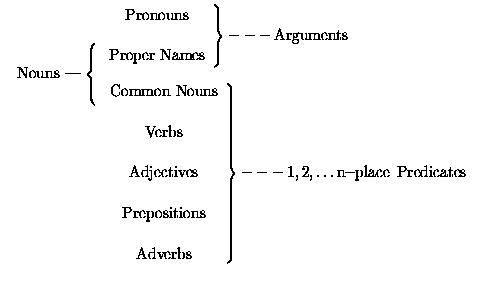

Logical predicates are expressed in natural language by words of various

parts of speech, including verbs, adjectives and common nouns. In particular,

there is no special connection between grammatical verbs and logical predicates.

The typical correspondences between the main English syntactic categories and

basic logical terms are diagrammed below.

Common nouns, used after a

copula, as man in He is a man plainly correspond to predicates. In

other positions, although they are embedded in grammatical noun phrases, as in

A man arrived, they nonetheless correspond to predicates.

The development of formal logical languages, of which first order predicate logic is the foremost example and hardiest survivor, heralds a realization of the essential distance between ordinary language and purely truth-conditional representations of `objective' situations in the world. Indeed, early generations of modern logicians, including Frege, Russell and Tarski, believed the gap between ordinary language and logical, purely truth-conditional representations to be unbridgable. Times have changed, and since Montague there have been substantial efforts to describe a systematic mapping between truth conditions and ordinary language. Ordinary language serves several purposes in addition to representation of states of affairs. My argument in this article concerns mental representations of situations in the world, as these representations existed before language, and even before communication. Thus matters involving how information is presented in externalized utterances is not our concern here. The exclusive concern here with pre-communication mental representations absolves us from responsibility to account for further cognitive properties assumed by more or less elaborate signals in communication systems, such as natural languages. For this reason also, the claims to be made here about the neural correlates of PREDICATE(x) do not relate at all directly to matters of linguistic processing (e.g. sentence parsing), as opposed to the prelinguistic representation of events and situations.

Bertrand Russell was, of course, very far from conceiving of the logical

enterprise as relating to how non-linguistic creatures represent the world. But

it might be helpful to note that Russell's kind of flat logical representations,

as in  x [KoF(x) & wise(x)] for

The king of France is wise [1], are

essentially like those assumed by Batali (2002) and focussed on in this article.

Russell's famous controversy with Strawson (Russell, 1905, 1957;

Strawson, 1950)

centered on the effect of embedding an expression for a predicate in a noun

phrase determined by the definite article. Questions of definiteness only arise

in communicative situations, with which Strawson was more concerned. A

particular object in the world is inherently neither definite nor indefinite;

only when we talk about an object do our referring noun phrases begin to have

markers of definiteness, essentially conveying ``You are already aware of this

thing''.

x [KoF(x) & wise(x)] for

The king of France is wise [1], are

essentially like those assumed by Batali (2002) and focussed on in this article.

Russell's famous controversy with Strawson (Russell, 1905, 1957;

Strawson, 1950)

centered on the effect of embedding an expression for a predicate in a noun

phrase determined by the definite article. Questions of definiteness only arise

in communicative situations, with which Strawson was more concerned. A

particular object in the world is inherently neither definite nor indefinite;

only when we talk about an object do our referring noun phrases begin to have

markers of definiteness, essentially conveying ``You are already aware of this

thing''.

The thesis proposed here is that there were, and still are, pre-communication mental representations which embody the fundamental distinction between predicates and arguments, and in which the foundational primitive relationship is that captured in logic by formulae of the kind PREDICATE(x). The novel contribution here is that the centrality of predicate-argument structure has a neural basis, adapted to a sentient organism's traffic with the world, rather than having to be postulated as `logically true' or even Platonically given. Neuroscience can, I claim, offer some informative answers to the question of where elements of logical form came from.

The strategy here is to assume that a basic element of first order predicate logic notation, PREDICATE(x), suitably embedded, can be systematically related to natural language structures, in the ways pursued by recent generations of formal semanticists of natural language, for example, Montague (1970, 1973), Parsons (1990), Kamp and Reyle (1993). The hypothesis here is not that all linguistic structure derives from prelinguistic mental representations. I argue elsewhere (Hurford, 2002) that in fact very little of the rich structure of modern languages directly mirrors any mental structure pre-existing language.

In generative linguistics, such terms as `deep structure' and `surface structure', `logical form' and `phonetic form' have specialized theory-internal meanings, but the basic insight inherent in such terminology is that linguistic structure is a mapping between two distinct levels of representation. In fact, most of the complexity in language structure belongs to this mapping, rather than to the forms of the anchoring representations themselves. In particular, the syntax of logical form is very simple. All of the complexities of phonological structure belong to the mapping between meaning and form, rather than to either meaning or form per se. A very great proportion of morphosyntactic structure clearly also belongs to this mapping --- components such as word-ordering, agreement phenomena, anaphoric marking, most syntactic category distinctions (e.g. noun, verb, auxiliary, determiner) which have no counterparts in logic, and focussing and topicalization devices. In this respect, the view taken here differs significantly from Bickerton's (in Calvin and Bickerton (2000) that modern grammar in all its glory can be derived, with only a few auxiliary assumptions, from the kind of mental representations suitable for cheater detection that our prelinguistic ancestors would have been equipped with; see Hurford (2002) for a fuller argument.

Therefore, to argue, as I will in this paper, that a basic component of the representation of meaning pre-exists language and can be found in apes, monkeys and possibly other mammals, leaves most of the structure of language (the complex mappings of meanings to phonetic signals) still unexplained in evolutionary terms. To argue that apes have representations of the form PREDICATE(x) does not make them out to be language-capable humans. Possession of the PREDICATE(x) form of representation is evidently not sufficient to propel a species into full-blown syntactic language. There is much more to human language than predicate-argument structure, but predicate-argument structure is the semantic foundation on which all the rest is built.

The view developed here is similar in its overall direction to that taken by Bickerton (1990). Bickerton argues for a `primary representation system (PRS)' existing in variously developed forms in all higher animals. ``In all probability, language served in the first instance merely to label protoconcepts derived from prelinguistic experience'' (91). This is entirely consistent with the view proposed here, assuming that what I call `prelinguistic mental predicates' are Bickerton's `protoconcepts'. Bickerton also believes, as I do, that the representation systems of prelinguistic creatures have predicate-argument structure. Bickerton further suggests that, even before the emergence of language, it is possible to distinguish subclasses of mental predicates along lines that will eventually give rise to linguistic distinctions such as Noun/Verb. He argues that ``[concepts corresponding to] verbs are much more abstract that [those corresponding to] nouns'' (98). I also believe that a certain basic functional classification of predicates can be argued to give rise to the universal linguistic categories of Noun and Verb. But that subdivision of the class of predicates is not my concern here. Here the focus is on the more fundamental issue of the distinction between predicates and their arguments. So this paper is not about the emergence of Noun/Verb structure (which is a story that must wait for another day). (Batali's (2002) impressive computer simulations of the emergence of some aspects of natural language syntax start from conjunctions of elementary formulae in PREDICATE(x) form, but it is notable that they do not arrive at anything corresponding to a Noun/Verb distinction.)

On top of predicate-argument structure, a number of other factors need to come together for language to evolve. Only the sketchiest mention will be given of such factors here, but they include (a) the transition from private mental representations to public signals; (b) the transition from involuntary to voluntary control; (c) the transition from epigenetically determined to learned and culturally transmitted systems; (d) the convergence on a common code by a community; (e) the evolution of control of complex hierarchically organized signalling behaviour (syntax); (f) the development of deictic here-and-now talk into definite reference and proper naming capable of evoking events and things distant in time and space. It is surely a move forward in explaining the evolution of language to be able to dissect out the separate steps that must be involved, even if these turn out to be more dauntingly numerous than was previously thought. (In parallel fashion, the discovery of the structure of DNA immediately posed problems of previously unimagined complexity to the next generation of biologists.)

1.2 Prelinguistic predicates

In the view adopted here, a predicate corresponds, to a first approximation, to a judgement that a creature can make about an object. Some predicates are relatively simple. For a simple predicate, the senses provide the brain with input allowing a decision with relatively little computation. On a scale of complexity, basic colour predicates are near the simple end, while predicates paraphrasable as sycamore or weasel are much more complex. Mentally computing the applicability of complex predicates often involves simpler predicates, hence relatively more computation.Some ordinary languages predicates, such as big, depend for their interpretation on the prior application of other predicates. Generically speaking, a big flea is not big; this is no contradiction, once it is admitted that the sentence implicitly establishes two separate contexts for the application of the adjective big. There is `big, generically speaking', i.e. in the context of consideration of all kinds of objects and of no one kind of object in particular; and there is `big for a flea'. This is semantic modulation. Such modulation is not a solely linguistic phenomenon. Many of our higher-level perceptual judgements are modulated in a similar way. An object or substance characterized by its whitish colour (like chalk) reflects bright light in direct sunlight, but a light of lower intensity in the shade at dusk. Nevertheless, the brain, in both circumstances, is able to categorize this colour as whitish, even though the lower intensity of light is reflected by a greyish object or substance (like slate) in direct sunlight. In recognizing a substance as whitish or greyish, the brain adjusts to the ambient lighting environment. Viewing chalk in poor light, the visual system returns the judgement `Whitish, for poor light'; in response to light of the same intensity, as when viewing slate in direct sunlight, the visual system returns the judgement `Greyish, for broad daylight'. A similar example can be given from speech perception. In a language such as Yoruba, with three level lexical tones, high, mid and low, a single word spoken by an unknown speaker cannot reliably be recognized as on a high tone spoken by a man or a low or mid tone spoken by a woman or child. But as soon as a few words are spoken, the hearer recognizes the appropriate tones in the context of the overall pitch range of the speaker's voice. Thus the ranges of external stimuli which trigger a mental predicate may vary, systematically, as a function of other stimuli present.

This article will be mainly concerned with 1-place predicates, arguing that they correspond to perceived properties. There is no space here to present a fully elaborated extension of the theory to predicates of degree greater than 1, but a few suggestive remarks may convince a reader that in principle the theory may be extendable to n-place predicates (n > 1).

Prototypical events or situations involving 2-place predicates are described

by John kicked Fido (an event) or The cat is on the mat (a

situation). Here I will take it as given that observers perceive events or

situations as unified wholes; there is some psychological reality to the concept

of an atomic event or situation. In a 2-place predication (barring predicates

used reflexively), the two participant entities involved in the event or

situation also have properties. In formal logic, it is possible to write a

formula such as  x

x  y [kick(x, y)], paraphrasable as Something

kicks something. But I claim that it is never possible for an observer to

perceive an event of this sort without also being able to make some different

1-place judgements about the participants. Perhaps the most plausible potential

counterexample to this claim would be reported as I feel something. Now

this could be intended to express a 1-place state, as in I am hungry; but

if it is genuinely intended as a report of an experience involving an entity

other than the experiencer, I claim that there will always be some (1-place)

property of this entity present to the mind of the reporter. That is, the

`something' which is felt will always be felt as having some property, such as

sharpness, coldness or furriness. Expressed in terms of a psychologically

realistic logical language enhanced by meaning postulates, this amounts to the

claim that every 2-place predicate occurs in the implicans of some

meaning postulate whose implicatum includes 1-place predicates applicable

to its arguments. The selectional restrictions expressed in some generative

grammars provide good examples; the subject of drink must be animate, the

object of drink must be a liquid.

y [kick(x, y)], paraphrasable as Something

kicks something. But I claim that it is never possible for an observer to

perceive an event of this sort without also being able to make some different

1-place judgements about the participants. Perhaps the most plausible potential

counterexample to this claim would be reported as I feel something. Now

this could be intended to express a 1-place state, as in I am hungry; but

if it is genuinely intended as a report of an experience involving an entity

other than the experiencer, I claim that there will always be some (1-place)

property of this entity present to the mind of the reporter. That is, the

`something' which is felt will always be felt as having some property, such as

sharpness, coldness or furriness. Expressed in terms of a psychologically

realistic logical language enhanced by meaning postulates, this amounts to the

claim that every 2-place predicate occurs in the implicans of some

meaning postulate whose implicatum includes 1-place predicates applicable

to its arguments. The selectional restrictions expressed in some generative

grammars provide good examples; the subject of drink must be animate, the

object of drink must be a liquid.

In the case of asymmetric predicates, the asymmetry can always be expressed in terms of one participant in the event or situation having some property which the other lacks. And, I suggest, this treatment is psychologically plausible. In cases of asymmetric actions, as described by such verbs as hit and eat, the actor has the metaproperty of being the actor, cashed out in more basic properties such as movement, animacy and appearance of volition. Likewise, the other, passive, participant is typically characterized by properties such as lack of movement, change of state, inanimacy and so forth (see Cruse (1973) and Dowty (1991) for relevant discussion). Cases of asymmetric situations, such as are involved in spatial relations as described by prepositions such as on, in and under, are perhaps less obviously treatable in this way. Here, I suggest that properties involving some kind of perceptual salience in the given situation are involved. In English, while both sentences are grammatical, The pen is on the table is commonplace, but The table is under the pen is studiously odd. I would suggest that an object described by the grammatical subject of on has a property of being taken in as a whole object comfortably by the eye, whereas the other object involved lacks this property and is perceived (on the occasion concerned) rather as a surface than as a whole object.

In the case of symmetric predicates, as described by fight each other or as tall as, the arguments are not necessarily distinguished by any properties perceived by an observer.

I assume a version of event theory (Parsons, 1990,; Davidson, 1980), in which

the basic ontological elements are whole events or situations, annotated as

e, and the participants of these events, typically no more than about

three, annotated as x, y and z. For example, the event described

by A man bites a dog could be represented as  e, x, y, bite(e), man(x), dog(y), agent(x),

patient(y). In clumsy English, this corresponds to `There is a biting event

involving a man and a dog, in which the man is the active volitional

participant, and the dog is the passive participant.' The less newsworthy event

would be represented as

e, x, y, bite(e), man(x), dog(y), agent(x),

patient(y). In clumsy English, this corresponds to `There is a biting event

involving a man and a dog, in which the man is the active volitional

participant, and the dog is the passive participant.' The less newsworthy event

would be represented as  e, x, y, bite(e),

man(x), dog(y), agent(y), patient(x). The situation described by The pen

is on the table could be represented as

e, x, y, bite(e),

man(x), dog(y), agent(y), patient(x). The situation described by The pen

is on the table could be represented as  e, x, y, on(e), pen(x), table(y),

small_object(x), surface(y).

e, x, y, on(e), pen(x), table(y),

small_object(x), surface(y).

In this enterprise it is important to realize the great ambiguity of many ordinary language words. The relations expressed by English on in An elephant sat on a tack and in A book lay on a table are perceptually quite different (though they also have something in common). Thus there are at least several mental predicates corresponding to ordinary language words. When in the histories of natural languages, words change their meanings, the overt linguistic forms become associated with different mental predicates. The predicates which I am concerned with here are prelinguistic mental predicates, and are not to be simply identified with words.

Summarizing these notes, it is suggested that it may be possible to sustain the claim that n-place predicates (n > 1) are, at least in perceptual terms, constructible from 1-place predicates. The core of my argument in this article concerns formulae of the form PREDICATE(x), i.e. 1-place predications. My core argument in this article does not stand or fall depending on the correctness of these suggestions about n > 1-place predicates. If the suggestions about n > 1-place predicates are wrong, then the core claim is limited to 1-place predications, and some further argument will need to be made concerning the neural basis of n > 1-place predications. A unified theory relating all logical predicates to the brain is methodologically preferable, so there is some incentive to pursue the topic of n > 1-place predicates.

1.3 Individual variables as prelinguistic arguments

Here are two formulae of first order predicate logic (FOPL), with their

English translations.

CAME(john)

(Translation: `John came')

(Translation: `John came') x[TALL(x) & MAN(x) & CAME(x)]

x[TALL(x) & MAN(x) & CAME(x)]

(Translation: `A tall man came')

(Translation: `A tall man came')

The canonical fillers of the argument slots in predicate logic formulae are constants denoting individuals, corresponding roughly to natural language proper names. In the more traditional schemes of semantics, no distinction between extension and intension is made for proper names. On many accounts, proper names have only extensions (namely the actual individuals they name), and do not have intensions (or `senses'). ``What is probably the most widely accepted philosophical view nowadays is that they [proper names] may have reference, but not sense.'' (Lyons, 1977:219) ``Dictionaries do not tell us what [proper] names mean --- for the simple reason that they do not mean anything'' (Ryle, 1957) In this sense, the traditional view has been that proper names are semantically simpler than predicates. More recent theorizing has questioned that view.

In a formula such as CAME(john), the individual constant argument term is interpreted as denoting a particular individual, the very same person on all occasions of use of the formula. FOPL stipulates by fiat this absolutely fixed relationship between an individual constant and a particular individual entity. Note that the denotation of the term is a thing in the world, outside the mind of any user of the logical language. It is argued at length by Hurford (2001) that the mental representations of proto-humans could not have included terms with this property. Protothought had no equivalent of proper names. Control of a proper name in the logical sense requires Godlike omniscience. Creatures only have their sense organs to rely on when attempting to identify, and to reidentify, particular objects in the world. Where several distinct objects, identical to the senses, exist, a creature cannot reliably tell which is which, and therefore cannot guarantee control of the fixed relation between an object and its proper name that FOPL stipulates. It's no use applying the same name to each of them, because that violates the requirement that logical languages be unambiguous. More detailed arguments along these lines are given in Hurford (2001, 1999), but it is worth repeating here the counterargument to the most common objection to this idea. It is commonly asserted that animals can recognize other animals in their groups.

``The following quotation demonstrates the prima facie attraction of the impression that animals distinguish such individuals, but simultaneously gives the game away.The logical notion of an individual constant permits no degree of tolerance over the assignment of these logical constants to individuals; this is why they are called `constants'. It is an a priori fiat of the design of the logical language that individual constants pick out particular individuals with absolute consistency. In this sense, the logical language is practically unrealistic, requiring, as previously mentioned, Godlike omniscience on the part of its users, the kind of omniscience reflected in the biblical line ``But even the very hairs of your head are all numbered'' (Matthew, Ch.10).`The speed with which recognition of individual parents can be acquired is illustrated by the `His Master's Voice' experiments performed by Stevenson et al. (1970) on young terns: these responded immediately to tape-recordings of their own parents (by cheeping a greeting, and walking towards the loudspeaker) but ignored other tern calls, even those recorded from other adult members of their own colony.' (Walker, 1983:215)Obviously, the tern chicks in the experiment were not recognizing their individual parents --- they were being fooled into treating a loudspeaker as a parent tern. For the tern chick, anything which behaved sufficiently like its parent was `recognized' as its parent, even if it wasn't. The tern chicks were responding to very finely-grained properties of the auditory signal, and apparently neglecting even the most obvious of visual properties discernible in the situation. In tern life, there usually aren't human experimenters playing tricks with loudspeakers, and so terns have evolved to discriminate between auditory cues just to the extent that they can identify their own parents with a high degree of reliability. Even terns presumably sometimes get it wrong. ` ... animals respond in mechanical robot-like fashion to key stimuli. They can usually be `tricked' into responding to crude dummies that resemble the true, natural stimulus situation only partially, or in superficial respects.' (Krebs and Dawkins, 1984:384) '' (Hurford, 2001)

Interestingly, several modern developments in theorizing about predicates and their arguments complicate the traditional picture of proper names, the canonical argument terms. The dominant analysis in the modern formal semantics of natural languages (e.g. Montague (1970), Montague (1973)) does not treat proper names in languages (e.g. John) like the individual constants of FOPL. For reasons having to do with the overall generality of the rules governing the compositional interpretation of all sentences, modern logical treatments make the extensions of natural language proper names actually more complex than, for example, the extensions of common nouns, which are 1-place predicates. In such accounts, the extension of a proper name is not simply a particular entity, but the set of classes containing that entity, while the extension of a 1-place predicate is a class. Concretely, the extension of cat is the class of cats, while the extension of John is the set of all classes containing John.

Further, it is obvious that in natural languages, there are many kinds of expressions other than proper names which can fill the NP slots in clauses.

`` Semantically then PNs are an incredibly special case of NP; almost nothing that a randomly selected full NP can denote is also a possible proper noun denotation. This is surprising, as philosophers and linguists have often treated PNs as representative of the entire class of NPs. Somewhat more exactly, perhaps, they have treated the class of full NPs as representable ... by what we may call individual denoting NPs.'' (Keenan (1987:464))

This fact evokes one of two responses in logical accounts. The old-fashioned way was to deny that there is any straightforward correspondence between natural language clauses with non-proper-name subjects or objects and their translations in predicate logic (as Russell (1905) did). The modern way is to complicate the logical account of what grammatical subjects (and objects), including proper names, actually denote (as Montague did).

In sum, logical formulae of the type CAME(john), containing individual constants, cannot be plausibly claimed as corresponding to primitive mental representations pre-existing human language. The required fixing of the designations of the individual constants (`baptism' in Kripke (1980)'s terms) could not be practically relied upon. Modern semantic analysis suggests that natural language proper names are in fact more complex than longer noun phrases like the man, in the way they fit into the overall compositional systems of modern languages. And while proper names provide the shortest examples of (non-pronominal) noun phrases, and hence are convenient for brief expository examples, they are in fact somewhat peripheral in their semantic and syntactic properties.

Such considerations suggest that, far from being primitive, proper names are more likely to be relatively late developments in the evolution of language. In the historical evolution of individual languages, proper names are frequently, and perhaps always, derived from definite descriptions, as is still obvious from many, e.g. Baker, Wheeler, Newcastle. It is very rare for languages to lack proper names, but such languages do exist. Machiguenga (or Matsigenka), an Arawakan language, is one, as several primary sources (Snell, 1964; Johnson, 2003) testify.

``A most unusual feature of Matsigenka culture is the near absence of personal names (W. Snell 1964: 17-25). Since personal names are widely regarded by anthropologists as a human universal (e.g. Murdock 1960: 132), this startling assertion is likely to be received with skepticism. When I first read Snells discussion of the phenomenon, before I had gone into the field myself, I suspected that he had missed something (perhaps the existence of secret ceremonial names) despite his compelling presentation of evidence and his conclusion:`I have said that the names of individual Machiguenga, when forthcoming, are either of Spanish origin and given to them by the white man, or nicknames. We have known Machiguenga Indians who reached adulthood and died without ever having received a name or any other designation outside of the kinship system. ... Living in small isolated groups there is no imperative need for them to designate each other in any other way than by kinship terminology. Although there may be only a few tribes who do not employ names, I conclude that the Machiguenga is one of those few (W. Snell 1964: 25).Experience has taught me that Snell was right. Although the Matsigenka of Shimaa did learn the Spanish names given them, and used them in instances where it was necessary to refer to someone not of their family group, they rarely used them otherwise and frequently forgot or changed them. (Johnson, 2003)

Joseph Henrich, another researcher on Machiguenga tells me ``This is a well established fact among Machiguenga researchers.'' (personal communication).

In this society there is very little cooperation, exchange or sharing beyond the family unit. This insularity is reflected in the fact that until recently they didn't even have personal names, referring to each other simply as `father, `patrilineal same-sex cousin' or whatever.'' (Douglas, 2001:41)

The social arrangements of our prelinguistic ancestors probably involved no cooperation, exchange or sharing beyond the family unit, and the mental representations which they associated with individuals could well have been kinship predicates or other descriptive predicates.

In Australian languages, people are usually referred to by descriptive predicates.

``Each member of a tribe will also have a number of personal names, of different types. They may be generally known by a nickname, describing some incident in which they were involved or some personal habit or characteristic e.g. `[she who] knocked the hut over', `[he who] sticks out his elbows when walking', `[she who] runs away when a boomerang is thrown', `[he who] has a damaged foot'. But each individual will also have a sacred name, generally given soon after birth.'' (Dixon, 1980:27)

The extensive anthropological literature on names testifies to the very special status, in a wide range of cultures, of such sacred or `baptismal' proper names, both for people and places. It is common for proper names to be used with great reluctance, for fear of giving offense or somehow intruding on a person's mystical selfhood. A person's proper name is sometimes even a secret.

``the personal names by which a man is known are something more than names. Native statements suggest that names are thought to partake of the personality which they designate. The name seems to bear much the same relation to the personality as the shadow or image does to the sentient body.'' (Stanner, 1937, quoted in Dixon, 1980:28)It is hard to see how such mystical beliefs can have become established in the minds of creatures without language. More probably, it was only early forms of language itself that made possible such elaborate responses to proper names.

Hence, it is unlikely that any primitive mental representation contained any equivalent of a proper name, i.e. an individual constant. We thus eliminate formulae of the type of CAME(john) as candidates for primitive mental representations.

This leaves us with quantified formulae, as in  x [MAN(x) & TALL(x)]. Surely we

can discount the universal quantifier

x [MAN(x) & TALL(x)]. Surely we

can discount the universal quantifier  as a term in primitive mental

representations. What remains is one quantifier, which we can take to be

implicitly present and to bind the variable arguments of predicates. I propose

that formulae of the type PREDICATE(x) are evolutionarily primitive

mental representations, for which we can find evidence outside language.

as a term in primitive mental

representations. What remains is one quantifier, which we can take to be

implicitly present and to bind the variable arguments of predicates. I propose

that formulae of the type PREDICATE(x) are evolutionarily primitive

mental representations, for which we can find evidence outside language.

2 Neural correlates of PREDICATE(x)

It is high time to mention the brain. In terms of neural structures and processes, what justification is there for positing representations of the form PREDICATE(x) inside human heads? I first set out some groundrules for correlating logical formulae, defined denotationally and syntactically, with events in the brain.

Representations of the form PREDICATE(x) are here interpreted psychologistically; specifically, they are taken to stand for the mental events involved when a human attends to an object in the world and classifies it perceptually as satisfying the predicate in question. In this psychologistic view, it seems reasonable to correlate denotation with stimulus. Denotations belong in the world outside the organism; stimuli come from the world outside a subject's head. A whole object, such as a bird, can be a stimulus. Likewise, the properties of an object, such as its colour or shape, can be stimuli.

The two types of term in the PREDICATE(x) formula differ in their denotations. An individual variable does not have a constant denotation, but is assigned different denotations on different occasions of use; and the denotation assigned to such a variable is some object in the world, such as a particular bird, or a particular stone or a particular tree. A predicate denotes a constant property observable in the world, such as greenness, roundness, or the complex property of being a certain kind of bird. The question to be posed to neuroscience is whether we can find separate neural processes corresponding to (1) the shifting, ad hoc assignment of a `mental variable' to different stimulus objects in the world, not necessarily involving all, or even many, of the objects' properties, and (2) the categorization of objects, once they instantiate mental object variables, in terms of their properties, including more immediate perceptual properties, such as colour, texture, and motion, and more complex properties largely derived from combinations of these.

The syntactic structure of the PREDICATE(x) formula combines the two types of term into a unified whole capable of receiving a single interpretation which is a function of the denotations of the parts; this whole is typically taken to be an event or a state of affairs in the world. The bracketing in the PREDICATE(x) formula is not arbitrary: it represents an asymmetric relationship between the two types of information represented by the variable and the predicate terms. Specifically, the predicate term is understood in some sense to operate on, or apply to, the variable, whose value is provided beforehand. The bracketing in the PREDICATE(x) formula is the first, lowest-level, step in the construction of complex hierarchical semantic structures, as provided, for example, in more complex formulae of FOPL. The innermost brackets in a FOPL formula are always those separating a predicate from its arguments. If we can find separate neural correlates of individual variables and predicate constants, then the question to be put to neuroscience about the validity of the whole formula is whether the brain actually at any stage applies the predicate (property) system to the outputs of the object variable system, in a way that can be seen as the bottom level of complex, hierarchically organized brain activity.

2.1 Separate locating and identifying components in vision and hearing

The evidence cited here is mainly from vision. Human vision is the most complex of all sensory systems. About a quarter of human cerebral cortex is devoted to visual analysis and perception. There is more research on vision relevant to our theme, but some work on hearing has followed the recent example of vision research and arrived at similar conclusions.

2.1.1 Dorsal and ventral visual streams

Research on the neurology of vision over the past two decades has reached two important broad conclusions. One important message from the research is that vision is not a single unified system: perceiving an object as having certain properties is a complex process involving clearly distinguishable pathways, and hence processes, in the brain (seminal works are Trevarthen (1968), Ungerleider and Mishkin (1982), Goodale and Milner (1992)).

The second important message from this literature, as argued for instance by Milner and Goodale (1995), is that much of the visual processing in any organism is inextricably linked with motor systems. If we are to carve nature at her joints, the separation of vision from motor systems is in many instances untenable. For many cases, it is more reasonable to speak of a number of visuomotor systems. Thus frogs have distinct visuomotor systems for orienting to and snapping at prey, and for avoiding obstacles when jumping (Ingle, 1973, 1980, 1982). Distinct neural pathways from the frog's retina to different parts of its brain control these reflex actions.

Distinct visuomotor systems can similarly be identified in mammals:

``In summary, the modular organization of visuomotor behaviour in representative species of at least one mammalian order, the rodents, appears to resemble that of much simpler vertebrates such as the frog and toad. In both groups of animals, visually elicited orienting movements, visually elicited escape, and visually guided locomotion around barriers are mediated by quite separate pathways from the retina right through to motor nuclei in the brainstem and spinal cord. This striking homology in neural architecture suggests that modularity in visuomotor control is an ancient (and presumably efficient) characteristic of vertebrate brains.'' (Milner and Goodale (1995):18-19)

Coming closer to our species, a clear consensus has emerged in primate (including human) vision research that one must speak of (at least) two separate neural pathways involved in the vision-mediated perception of an object. The literature is centred around discussion of two related distinctions, the distinction between magno and parvo channels from the retina to the primary visual cortex (V1) (Livingstone and Hubel, 1988), and the distinction between dorsal and ventral pathways leading from V1 to further visual cortical areas (Ungerleider and Mishkin (1982), Mishkin et al. (1983)). These channels and pathways function largely independently, although there is some crosstalk between them (Merigan et al. (1991), Van Essen et al. (1992)) , and in matters of detail there is, naturally, complication (e.g. Johnsrude et al. (1999), Hendry and Yoshioka (1994), Marois etal. (2000)) and some disagreement (e.g. Franz et al. (2000), Merigan and Maunsell (1993), Zeki (1993)). See Milner and Goodale (1995:33-39, 134-136) for discussion of the magno/parvo-dorsal/ventral relationship. (One has to be careful what one understands by `modular' when quoting Milner and Goodale (1995). In real brains modules are neural entities that modulate, compete and cooperate, rather than being encapsulated processors for one ``faculty'' (Arbib, 1987)). It will suffice here to collapse under the label `dorsal stream' two separate pathways from the retina to posterior parietal cortex; one route passes via the lateral geniculate nucleus and V1, and the other bypasses V1 entirely, passing through the superior colliculus and pulvinar. (See Milner and Goodale (1995:68).) While it is not obvious that both divergences pertain to the same functional role, the proposals made here are not so detailed or subtle as to suggest any relevant discrimination between these two branches of the route from retina to parietal cortex. The dorsal stream has been characterized as the `where' stream, and the ventral stream as the `what' stream. The popular `where' label can be misleading, suggesting a single system for computing all kinds of spatial location; as we shall see, a distinction must be made between the computing of egocentric (viewer-centred) locational information and allocentric (other-centred) locational information. Bridgeman et al. (1979) use the preferable terms `cognitive' (for `what' information) and `motor-oriented' (for `where' information). Another suitable mnemonic might be the `looking' stream (dorsal) and the `seeing' stream (ventral). Looking is a visuomotor activity, involving a subset of the information from the retina controlling certain motor responses such as eye-movement, head and body orientation and manual grasping or pointing. Seeing is a perceptual process, allowing the subject to deploy other information from the retina to ascribe certain properties, such as colour and motion, to the object to which the dorsal visuomotor looking system has already directed attention.

`` ... appreciation of an object's qualities and of its spatial location depends on the processing of different kinds of visual information in the inferior temporal and posterior parietal cortex, respectively.'' (Ungerleider and Mishkin (1982):578)

`` ... both cortical streams process information about the intrinsic properties of objects and their spatial locations, but the transformations they carry out reflect the different purposes for which the two streams have evolved. The transformations carried out in the ventral stream permit the formation of perceptual and cognitive representations which embody the enduring characteristics of objects and their significance; those carried out in the dorsal stream, which need to capture instead the instantaneous and egocentric features of objects, mediate the control of goal-directed actions.'' (Milner and Goodale (1995):65-66)Figure 1 shows the separation of dorsal and ventral pathways in schematic form

Figure 1. [From Milner

and Goodale (1995).] Schematic diagram showing major routes whereby retinal

input reaches dorsal and ventral streams. The inset [brain drawing] shows the

cortical projections on the right hemisphere of a macaque brain. LGNd, lateral

geniculate nucleus, pars dorsalis; Pulv, pulvinar nucleus; SC, superior

colliculus.

Experimental and pathological data support the distinction between visuo-perceptual and visuomotor abilities.

Patients with cortical blindness, caused by a lesion to the visual cortex in the occipital lobe, sometimes exhibit `blindsight'. Sometimes the lesion is unilateral, affecting just one hemifield, sometimes bilateral, affecting both; presentation of stimuli can be controlled experimentally, so that conclusions can be drawn equally for partially and fully blind patients. In fact, paradoxically, patients with the blindsight condition are never strictly `fully' blind, even if both hemifields are fully affected. Such patients verbally disclaim ability to see presented stimuli, and yet they are able to carry out precisely guided actions such as eye-movement, manual grasping and `posting' (into slots). (See Goodale et al. (1994), Marcel (1998), Milner and Goodale (1995), Sanders et al. (1974), Weiskrantz (1986), Weiskrantz (1997). See also Ramachandran and Blakeslee (1998) for a popular account).

These cited works on blindsight conclude that the spared unconscious abilities in blindsight patients are those identifying relatively low-level features of a `blindly seen' object, such as its size and distance from the observer, while access to relatively higher-level features such as colour and some aspects of motion is impaired [2]. Classic blindsight cases arise with humans, who can report verbally on their inability to see stimuli, but parallel phenomena can be tested and observed in non-humans. Moore et al. (1998) summarize parallels between residual vision in monkeys and humans with damage to V1.

A converse to the blindsight condition has also been observed, indicating a double dissociation between visually-directed grasping and visual discrimination of objects. Goodale et al.'s patient RV could discriminate one object from another, but was unable to use visual information to grasp odd-shaped objects accurately (Goodale et al. (1994)). Experiments with normal subjects also demonstrate a mismatch between verbally reported visual impressions of the comparative size of objects and visually-guided grasping actions. In these experiments, subjects were presented with a standard size-illusion-generating display, and asserted (incorrectly) that two objects differed in size; yet when asked to grasp the objects, they spontaneously placed their fingers exactly the same distance apart for both objects (Aglioti et al. (1995)). Aglioti et al.'s conclusions have recently been called into question by Franz et al. (2000); see the discussion by Westwood et al. (2000) for a brief up-to-date survey of nine other studies on this topic.

Advances in brain-imaging technology have made it possible to confirm in non-pathological subjects the distinct localizations of processing for object recognition and object location (e.g. Aguirre and D'Esposito (1997) and other studies cited in this paragraph). Haxby et al. (1991), while noting the homology between humans and nonhuman primates in the organization of cortical visual systems into ``what'' and ``where'' processing streams, also note some displacement, in humans, in the location of these systems due to development of phylogenetically newer cortical areas. They speculate that this may have ramifications for ``functions that humans do not share with nonhuman primates, such as language.'' Similar homology among humans and nonhuman primates, with some displacement of areas specialized for spatial working memory in humans, is noted by Ungerleider et al. (1998), who also speculate that this displacement is related to the emergence of distinctively human cognitive abilities.

The broad separation of visual pathways into ventral and dorsal has been tested against performance on a range of spatial tasks in normal individuals (Chen et al. (2000)). Seven spatial tasks were administered, of which three ``were constructed so as to rely primarily on known ventral stream functions and four were constructed so as to rely primarily on known dorsal stream functions'' (380) For example, a task where subjects had to make a same/different judgement on pairs of random irregular shapes was classified as a task depending largely on the ventral stream; and a task in which ``participants had to decide whether two buildings in the top view were in the same locations as two buildings in the side view'' (383) was classified as depending largely on the dorsal stream. These classifications, though subtle, seem consistent with the general tenor of the research reviewed here, namely that recognition of the properties of objects is carried out via the ventral stream and the spatial location of objects is carried out via the dorsal stream. After statistical analysis of the performance of forty-eight subjects on all these tasks, Chen et al. conclude

`` ... the specialization for related functions seen within the ventral stream and within the dorsal stream have direct behavioral manifestations in normal individuals. ... at least two brain-based ability factors, corresponding to the functions of the two processing streams, underlie individual differences in visuospatial information processing.'' (Chen et al. (2000):386)Chen et al. speculate that the individual differences in ventral and dorsal abilities have a genetic basis, mentioning interesting links with Williams syndrome (Bellugi et al. (1988), Frangiskakis et al. (1996)).

Milner (1998) gives a brief but comprehensive overview of the evidence, up to 1998, for separate dorsal and ventral streams in vision. For my purposes, Pylyshyn (2000) sums it up best:

`` ... the most primitive contact that the visual system makes with the world (the contact that precedes the encoding of any sensory properties) is a contact with what have been termed visual objects or proto-objects ... As a result of the deployment of focal attention, it becomes possible to encode the various properties of the visual objects, including their location, color, shape and so on.'' (Pylyshyn (2000):206)

2.1.2 Auditory location and recognition

Less research has been done on auditory systems than on vision. There are recent indications that a dissociation exists between the spatial location of the source of sounds and recognition of sounds, and that these different functions are served by separate neural pathways.

Rauschecker (1997), Korte and Rauschecker (1993) and Tian and Rauschecker (1998) investigated the responses of single neurons in cats to various auditory stimuli. Rauschecker concludes

``The proportion of spatially tuned neurons in the AE [= anterior ectosylvian] and their sharpness of tuning depends on the sensory experience of the animal. This and the high incidence of spatially tuned neurons in AE suggests that the anterior areas could be part of a `where' system in audition, which signals the location of sound. By contrast, the posterior areas of cat auditory cortex could be part of a `what' system, which analyses what kind of sound is present.'' (Rauschecker (1997):35)Rauschecker suggests that there could be a similar functional separation in monkey auditory cortex.

Romanski et al. (1999) have considerably extended these results in a study on macaques using anatomical tracing of pathways combined with microelectrode recording. Their study reveals a complex network of connections in the auditory system (conveniently summarized in a diagram by Kaas and Hackett (1999)). Within this complex network it is possible to discern two broad pathways, with much cross-talk between them but nevertheless somewhat specialized for separate sound-localization and higher auditory processing, respectively. The sound localization pathway involves some of the same areas that are centrally involved in visual localization of stimuli, namely dorsolateral prefrontal cortex and posterior parietal cortex. Kaas and Hackett (1999), in their commentary, emphasize the similarities between visual, auditory and somatosensory systems each dividing along `what' versus `where' lines[3]. Graziano et al. (1999) have shown that certain neurons in macaques have spatial receptive fields limited to about 30cm around the head of the animal, thus contributing to a specialized sound-location system.

Coming to human audition, Clarke et al. (2000) tested a range of abilities in four patients with known lesions, concluding

``Our observation of a double dissociation between auditory recognition and localisation is compatible with the existence of two anatomically distinct processing pathways for non-verbal auditory information. We propose that one pathway is involved in auditory recognition and comprises lateral auditory areas and the temporal convexity. The other pathway is involved in auditory-spatial analysis and comprises posterior auditory areas, the insula and the parietal convexity.'' (Clarke et al. (2000):805)

Evidence from audition is less central to my argument than evidence from vision. My main claim is that in predicate-argument structure, the predicate represents some judgement about the argument, which is canonically an attended-to object. There is a key difference between vision and hearing. What is seen is an object, typically enduring; what is heard is an event, typically fleeting. If language is any guide (which it surely is, at least approximately) mental sound predicates can be broadly subdivided into those which simply classify the sound itself (rendered in English with such words as bang, rumble, rush), and those which also classify the event or agent which caused the sound (expressed in English by such words as scrape, grind, whisper, moan, knock, tap). (Perhaps this broad dichotomy is more of a continuum.) When one hears a sound of the first type, such as a bang, there is no object, in the ordinary sense of `object', which `is the bang'. A bang is an ephemeral event. One cannot attend to an isolated bang in the way in which one directs one's visual attention to an enduring object. The only way one can simulate attention to an isolated bang is by trying to hold it in memory for as long as possible. This is quite different from maintained visual attention which gives time for the ventral stream to do heavy work categorizing the visual stimuli in terms of complex properties. Not all sounds are instantaneous, like bangs. One can notice a continuous rushing sound. But again, a rushing sound is not an object. Logically, it seems appropriate to treat bangs and rushing sounds either with zero-place predicates, i.e. as predicates without arguments, or as predicates taking event variables as arguments. (The exploration of event-based logics is a relatively recent development.) English descriptions such as There was a bang or There was a rushing tend to confirm this.

Sounds of the second type, classified in part by what (probably) caused them, allow the hearer to postulate the existence of an object to which some predicate applies. If, for example, you hear a miaow, you mentally classify this sound as a miaow. This, as with the bang or the rushing sound, is the evocation of a zero-place predicate (or alternatively a predicate taking an event variable as argument). Certainly, hearing a miaow justifies you in inferring that there is an object nearby satisfying certain predicates, in particular CAT(x). But is it vital to note that the English word miaow is two-ways ambiguous. Compare That sound was a miaow with A cat miaowed, and note that you can't say *That sound miaowed or *That cat was a miaow. Where the subject of miaow describes some animate agent, the verb actually means `cause a miaow sound'.

It is certainly interesting that the auditory system also separates `where' and `what' streams. But the facts of audition do not fit so closely with the intuitions, canonically involving categorizable enduring objects, which I believe gave rise to the invention by logicians of predicate-argument notation. The idea of zero-place predicates has generally been sidelined in logic (despite their obvious applicability to weather phenomena); and the extension of predicate-argument notation to include event variables is relatively recent. (A few visual predicates, like that expressed by English flash, are more like sounds, but these are highly atypical of visual predicates.)

We have now considered both visual and auditory perception, and related them to object-location motor responses involving eye-movement, head-movement, body movement, and manual grasping. Given that when the head moves, the eyes move too, and when the body moves, the hands, head and eyes also move, we should perhaps not be surprised to learn that the brain has ways of controlling the interactions of these bodyparts and integrating signals from them into single coherent overall responses to the location of objects. Given a stimulus somewhere far round to one side, we instinctively turn our whole body toward it; if the stimulus comes from not very far around, we may only turn our head; and if the stimulus comes from quite close to our front, we may only move our eyes. All this happens regardless of whether the stimulus was a heard sound or something glimpsed with the eye. Furthermore, as we turn our head or our eyes, light from the same object falls on a track across the retina, yet we do not perceive this as movement of the object. Research is beginning to close in on the areas of the brain that are responsible for this integrated location ability. Duhamel et al. (1992) found that the receptive fields of neurons in lateral intraparietal cortex are adjusted to compensate for saccades.

``One important form of spatial recoding would be to modulate the retinal information as a function of eye position with respect to the head, thus allowing the computation of location in head-based rather than retina-based coordinates. ... by the time visual information about spatial location reaches premotor areas in the frontal lobe, it has been considerably recalibrated by information derived from eye position and other non-retinal sources.'' (Milner and Goodale (1995):90)The evidence that Milner and Goodale (1995) cite is from Galletti and Battaglini (1989), Andersen et al. (1985), Andersen et al. (1990) and Gentilucci et al. (1983). Brotchie et al. (1995) present evidence that in monkeys

`` ... the visual and saccadic activities of parietal neurons are strongly affected by head position. The eye and head position effects are equivalent for individual neurons, indicating that the modulation is a function of gaze direction, regardless of whether the eyes or head are used to direct gaze. These data are consistent with the idea that the posterior parietal cortex contains a distributed representation of space in body-centred coordinates'' (Brotchie et al. (1995):232)Gaymard et al. (2000) report on a pathological human case which ``supports the hypothesis of a common unique gaze motor command in which eye and head movements would be rapidly exchangeable.'' (819) Nakamura (1999) gives a brief review of this idea of integrated spatial representations distributed over parietal cortex. Parietal cortex is the endpoint of the dorsal stream, and neurons in this area both respond to visual stimuli and provide motor control of grasping movements (Jeannerod et al. (1995)). In a study of vision-guided manual reaching, Carrozzo et al. (1999) have located a gradual transformation from viewer-centered to body-centered and arm-centered coordinates in superior and inferior parietal cortex. Graziano et al. (1997) discovered `arm+visual' neurons in macaques, which are sensitive to both visual and tactile stimuli, and in which the visual receptive field is adjusted according to the position of the arm. Stricanne et al. (1996) investigated how lateral intraparietal (LIP) neurons respond when a monkey makes saccades to the remembered location of sound sources in the absence of visual stimulation; they propose that ``area LIP is either at the origin of, or participates in, the transformation of auditory signals for oculomotor purposes.'' (2071) Most recently, Kikuchi-Yorioka and Sawaguchi (2000) have found neurons which are active both in the brief remembering of the location of a sound and in the brief remembering of the location of a light stimulus. A further interesting connection between visual and auditory localization comes from Weeks et al. (2000), who find that both sighted and congenitally blind subjects use posterior parietal areas in localizing the source of sounds, but the blind subjects also use right occipital association areas originally intended for dorsal-stream visual processing. Egly et al. (1994) found a difference between left-parietal-lesioned and right-parietal-lesioned patients in an attention-shifting task.

The broad generalization holds that the dorsal stream provides very little of all the information about an object that the brain eventually gets, but just about enough to direct attention to its location and enable some motor responses to it. The ventral stream fills out the picture with further detailed information, enough to enable a judgement by the animal about exactly what kind of object it is dealing with (e.g. flea, hair, piece of grit, small leaf, shadow, nipple, or in another kind of situation brother, sister, father, enemy, leopard, human). A PET scan study (Martin et al. (1996)) confirms that the recognition of an object (say, as a gorilla or a pair of scissors) involves activation of a ventral occipitotemporal stream. The particular properties that an animal identifies will depend on its ecological niche and lifestyle. It probably has no need of a taxonomy of pieces of grit, but it does need taxonomies of fruit and prey animals, and will accordingly have somewhat finely detailed mental categories for different types of fruit and prey. I identify such mental categories, along with non-constant properties, such as colour, texture and movement, which the ventral stream also delivers, with predicates.

2.2 `Dumb' attentional mechanisms and the object/property distinction

Some information about an object, for example enough about its shape and size to grasp it, can be accessed via the dorsal stream, in a preattentive process. The evidence cited above from optical size illusions in normal subjects shows that information about size as delivered by the dorsal stream can be at odds with information about size as delivered by the ventral stream. Thus we cannot say that the two streams have access to exactly the same property, `size'; presumably the same is true for shape. Much processing for shape occurs in the ventral stream, after its divergence from the dorsal stream in V1 (Gross (1992)) ; at the early V1 stage full shapes are not represented, but rather basic information about lines and oriented edges, as Hubel and Wiesel (1968) first argued, or possibly about certain 3D aspects of shape (Lehky and Sejnowski, 1988). Something about the appearance of an object in peripheral vision draws attention to it. Once the object is focally attended to, we can try to report the `something' about it that drew our attention. But the informational encapsulation (in the sense of Fodor (1983)) of the attention-directing reflex means that the more deliberative process of contemplating an object cannot be guaranteed to report accurately on this `something'. And stimuli impinging on the retinal periphery trigger different processes from stimuli impinging on the fovea. Thus it is not clear whether the dorsal stream can be said to deliver any properties, or mental predicates, at all. It may not be appropriate to speak of the dorsal stream delivering representations (accessible to report) of the nature of objects. Nevertheless, in a clear sense, the dorsal stream does deliver objects, in a minimal sense of `object' to be discussed below. What the dorsal stream delivers, very fast, is information about the egocentric location of an object, which triggers motor responses resulting in the orientation of focal attention to the object. (At a broad-brush level, the differences between preattentive processes and focal attention have been known for some time, and are concisely and elegantly set out in Ch.5 of Neisser (1967).) In a functioning high-level organism, the information provided by the dorsal and ventral streams can be expected to be well coordinated (except in the unusual circumstances which generate illusions). Thus, although predicates/properties are delivered by the ventral stream it would not be surprising if a few of the mental predicates available to a human being did not also correspond at least roughly to information of the type used by the dorsal stream. But humans have an enormous wealth of other predicates as well, undoubtedly accessed exclusively via the ventral stream, and bearing only indirect relationships to salient attention-drawing traits of objects. Humans classify and name objects (and substances) on the basis of properties at all levels of concreteness and salience. Landau et al. (1988), Smith et al. (1996), Landau et al. (1998a) and Landau et al. (1998b) report a number of experiments on adults' and children's dispositions to name familiar and unfamiliar objects. There are clear differences between children and adults, and between children's responses to objects that they in some sense understand and to those that are strange to them. Those subjects with least conceptual knowledge of the objects presented, that is the youngest children, presented with strange objects, tended to name objects on the basis of their shape. Smith et al. (1996) relate this disposition to the attention-drawing traits of objects: